When you can’t deploy on demand, you’ve lost control of your software. Risk accumulates in unreleased code: the more changes you batch together, the more likely they are to trigger overheads, rework, and failures. Unless you prioritize deployability, every blocker that stops you from going live lets that risk grow to dangerous levels.

By prioritizing work that returns software to a deployable state ahead of other kinds of work, such as feature development, you’ll avoid the toxicity of tangled work batches. Instead, you can smoothly flow changes from test to production and get the feedback you need to be confident that your software works.

Crucially, if you discover a high-severity bug or security problem, there are no roadblocks to getting fixes into production. You don’t need a special “expedited lane” for these changes, so you never skip the steps that stop bad changes from reaching your codebase.

Let’s take a look at problem indicators that will help you identify and fix common deployability issues. Being able to answer the deployability question is a mile marker, not the destination, but if you can’t answer it, you’re going to waste time looking for landmarks!

Watch the episode

You can watch the episode below, or read on to find some of the key discussion points.

The awkward silence problem

When you ask your team, “Are we ready to deploy?”, the correct answer is either “absolutely” or “no way”. The worst possible answer is silence or confusion. If the team doesn’t know whether they can deploy, they’re missing the deployment pipeline that would generate the answer.

When you commit a code change, build errors should be returned to you within a couple of minutes. Within 5 minutes of your commit, a suite of fast-running tests should tell you if you’ve broken functionality or the quality attributes of your application. By the same point, dependency checks, code scanning, and other static analysis tools should have told you if you have a problem. If you have long-running tests or tests that depend on the new software version being deployed to a test environment, you should know in another 5-20 minutes if they’ve detected a problem.

After all these checks, you should have a version of your software running in a test environment that you have high confidence in. If someone asked you whether you’re ready to deploy, you’d answer “absolutely”.
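To make the idea concrete, here is a minimal Python sketch of how a pipeline can turn its check results into a definite answer. The `CheckResult` type, the stage names, and the time budgets are assumptions for illustration, loosely based on the rough timings above; they don’t come from any particular pipeline tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CheckResult:
    """Outcome of one pipeline stage (hypothetical structure)."""
    name: str
    passed: bool
    minutes: float         # how long the stage took
    budget_minutes: float  # illustrative feedback budget for the stage


def ready_to_deploy(results: list[CheckResult]) -> str:
    """Return a definite answer: 'absolutely' or 'no way'.

    An empty result list means no pipeline ran, so the answer is
    'no way' rather than silence.
    """
    if not results:
        return "no way"
    return "absolutely" if all(r.passed for r in results) else "no way"


def slow_stages(results: list[CheckResult]) -> list[str]:
    """Names of stages that took longer than their feedback budget."""
    return [r.name for r in results if r.minutes > r.budget_minutes]


# Illustrative run; the budgets mirror the rough timings described above.
run = [
    CheckResult("build", True, 1.5, 2),
    CheckResult("fast tests", True, 4.0, 5),
    CheckResult("static analysis", True, 3.0, 5),
    CheckResult("deployed tests", True, 22.0, 20),
]

print(ready_to_deploy(run))  # absolutely
print(slow_stages(run))      # ['deployed tests']
```

The sketch keeps “did it pass?” separate from “did it answer fast enough?”. A slow stage doesn’t block the deployment here, but it is worth surfacing because slow feedback is an early sign that the answer is getting harder to obtain.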

Deployment automation makes sure the deployment is a non-event. It guarantees the same process is used to deploy to all environments, and makes it trivial to deploy on demand. A solid deployment pipeline contains all the checks you need to know whether you can deploy.
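One way to picture “the same process for all environments” is a single routine where only the environment data varies. The Python sketch below is an assumption-heavy illustration: the `Environment` type, the step wording, the version number, and the example URLs are all hypothetical placeholders, and a real deployment tool would execute the steps rather than list them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    """Per-environment data (hypothetical); the process itself is shared."""
    name: str
    url: str  # placeholder address


def deployment_steps(version: str, env: Environment) -> list[str]:
    """Build the ordered steps for one deployment.

    Only the environment's data varies; the sequence of steps does not.
    """
    return [
        f"fetch package {version}",
        f"apply configuration for {env.name}",
        f"install {version} at {env.url}",
        f"run smoke checks against {env.url}",
    ]


test_env = Environment("test", "https://test.example.com")
prod_env = Environment("production", "https://www.example.com")

# The same function produces the plan for every environment.
for env in (test_env, prod_env):
    for step in deployment_steps("1.4.2", env):
        print(step)
```

Because production runs exactly the steps that were already exercised in test, each test deployment also rehearses the production deployment.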

Manual testing is a ceiling, not a floor

Teams often treat manual testing as a foundation for verifying that a software version works. In reality, it acts more like a ceiling, limiting your ability to flow changes to production. As your software becomes more complex, the ceiling descends as the testing takes longer.

Long test cycles force you to subordinate your process to the speed at which you can test. You’ll notice when this happens because you’ll keep looping back to fix bugs and restart the test process. From the earliest change you make, through each bug list and all the re-testing, right through to the final software version, your software is not deployable.

Automating your tests is the real foundation for your software. This raises the ceiling and moves the constraint away from the test cycle.

Your old code wasn’t written to be tested

If your application is successful, it will have some history. Part of that history is often that it wasn’t written with test automation in mind. That means you need to identify where your risk is, find the seams that will make the code testable, and start adding characterization tests that record what the code does today.

Once some old code is wrapped with tests, it becomes far easier to change the code design, because the tests will fail if you break something.
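As a rough sketch of what wrapping old code in tests can look like, the Python example below pins down the current behavior of a legacy function. The function `legacy_shipping_cost` and its pricing rules are invented for illustration; in practice, you would run the real code and record what it returns.

```python
def legacy_shipping_cost(weight_kg, express):
    """Stand-in for old code whose rules nobody fully remembers."""
    cost = 4.0 + weight_kg * 1.25
    if express:
        cost = cost * 2 - 1
    if weight_kg > 20:
        cost += 7.5
    return round(cost, 2)


def test_current_behavior_is_preserved():
    """Pin down what the code does today, not what it should do."""
    # Expected values were captured by running the existing code,
    # including the cases on either side of the 20 kg boundary.
    observed = {
        (1, False): 5.25,
        (1, True): 9.5,
        (20, False): 29.0,
        (21, False): 37.75,
        (21, True): 67.0,
    }
    for (weight_kg, express), expected in observed.items():
        assert legacy_shipping_cost(weight_kg, express) == expected
```

The test makes no judgment about whether 67.0 is the right price for a heavy express parcel. It only guarantees that a refactoring which changes the answer will be noticed. A test runner such as pytest can collect the function, or you can call it directly.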

Automation is living documentation

When developers move on, a portion of your institutional knowledge goes with them. High-quality documentation can help teams distribute this knowledge and reduce its loss, and the best kind of documentation is test automation.

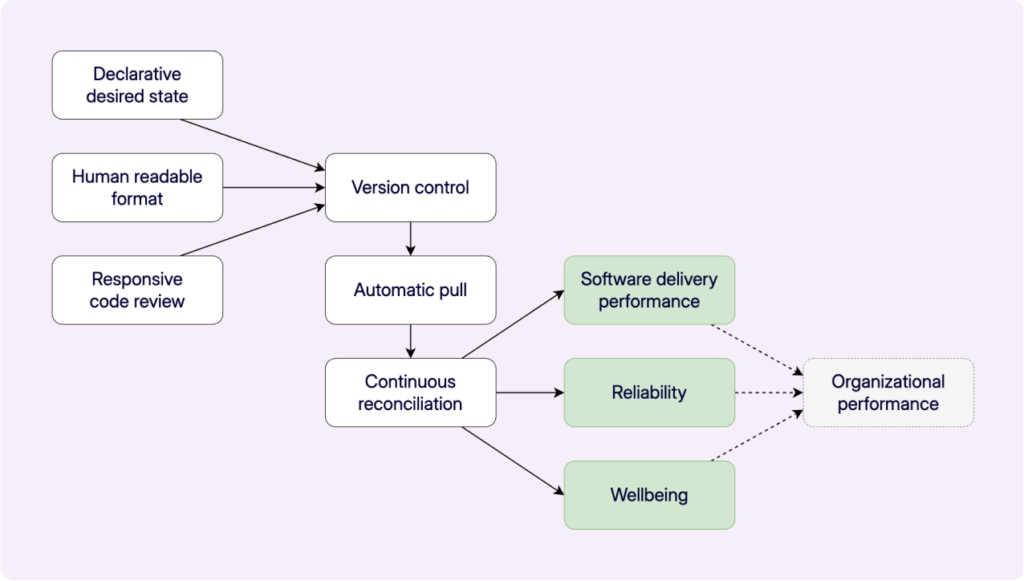

Well-written automation, like tests, deployment automation, and infrastructure as code, performs useful functions while effortlessly documenting them. Because you make all changes through the living documentation, it is always up to date.

The hidden cost of undocumented knowledge becomes painfully clear when you have to deploy without the person who normally handles it. You follow their checklist carefully, confirm every step, and everything looks right; yet the deployment still fails. What you didn’t know is that the checklist stopped being accurate months ago, because the person doing the deployments stopped consulting it. All the new steps they introduced lived in their head, not on paper.

The living documentation built into automation tools is especially valuable when onboarding new developers. Rather than relying on tribal knowledge passed down through conversations and shadowing, a new team member can read the test suite and understand not just what the software does, but what it’s supposed to do and why certain behaviors matter. That’s documentation that keeps pace with the code because it is the code.
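Here is a small Python sketch of tests that read as documentation. The 14-day trial rule, the function names, and the dates are hypothetical; the point is that the test names state the intended behavior and the comment records why it matters.

```python
from datetime import date, timedelta

TRIAL_DAYS = 14  # hypothetical business rule


def trial_end(start: date) -> date:
    """Last day on which a trial account is active."""
    return start + timedelta(days=TRIAL_DAYS - 1)


def is_trial_active(start: date, today: date) -> bool:
    """True from the sign-up day through the final trial day, inclusive."""
    return start <= today <= trial_end(start)


def test_trial_is_active_on_its_final_day():
    # Why it matters: customers are promised 14 full days, counting the
    # day they sign up, so day 14 must still work.
    start = date(2024, 3, 1)
    assert is_trial_active(start, date(2024, 3, 14))


def test_trial_expires_the_day_after_its_final_day():
    start = date(2024, 3, 1)
    assert not is_trial_active(start, date(2024, 3, 15))
```

A new team member reading these two tests learns the rule, its boundary, and the reason for it without asking anyone, and the tests fail if the code ever stops matching that description.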

The value of long-term sustainability

Like its Agile predecessors, Continuous Delivery values long-term sustainability. That means you invest a little more effort up front to constrain maintenance costs over the long term. Writing tests may mean a feature takes 20-25% more time to implement, but the defect density can be 91% lower than similar features not guided by tests (Microsoft VS).

If bug-fixing time falls in line with defect density, you could reduce 40 hours of bug fixing to just 3.6 hours by guiding feature development with tests. You also save on other overheads caused by escaped bugs, like reputational damage, customer churn, support costs, pinpointing and debugging, test cycles, and feature delay.
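The 3.6-hour figure is simple arithmetic: a 91% reduction leaves 9% of the original 40 hours. The short Python sketch below shows the calculation, under the simplifying assumption that time spent fixing bugs is proportional to defect density. The function name is hypothetical.

```python
def projected_bug_fix_hours(baseline_hours: float, defect_reduction: float) -> float:
    """Scale bug-fixing time by the share of defects that remain.

    Assumes bug-fixing time is proportional to defect density, which is
    a simplification.
    """
    return baseline_hours * (1 - defect_reduction)


hours = projected_bug_fix_hours(40, 0.91)
print(round(hours, 1))  # 3.6
```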

Conclusion

While it takes some effort to set up a strong deployment pipeline, knowing whether a software version is deployable pays dividends. Technical practices like test-driven development and pair programming are needed to keep software economically viable in the long term, even though they require a little more effort up front.

If you can’t answer the question “Is your software deployable?”, you’re sure to run into trouble.